
John Asaro

I am a senior at Connecticut College, double majoring in Computer Science and Psychology. I have experience in software development and research, and most of my recent projects have been done in collaboration with the Autonomous Agent Learning Lab, of which I am a member. I hope you find some of my work interesting, including both my published and unpublished research projects.

Email / Resume / ResearchGate / LinkedIn / GitHub


Research

My research focuses on developing AI agents that solve problems, either by completing tasks or by optimizing toward a goal. Examples include training agents to play the games XPilot, DOOM, and Ikemen GO, and using evolutionary algorithms to generate hexapod gaits. I have also worked with Dr. Christine Chung in the field of computational social choice.

Published Research


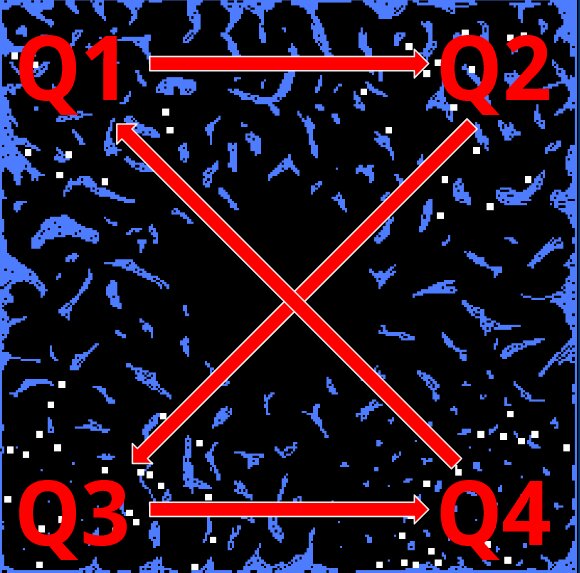

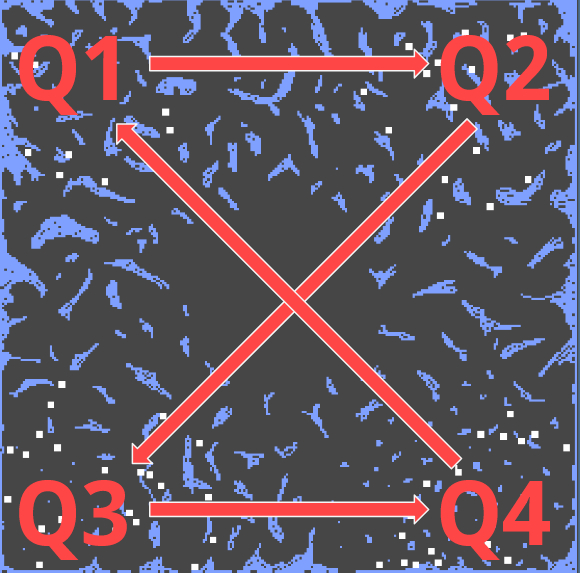

Niching Agents in the Core

Gary B. Parker, Jim O'Connor, John Asaro
17th International Conference on Evolutionary Computation Theory and Applications, 2025
Project Page / ResearchGate

Abstract: The Core is a unique competitive co-evolution algorithm that allows agents to evolve autonomous control without utilizing a traditional fitness function. The agents evolve via local interactions through tournament selection, crossover, and mutation, producing offspring by evolving better controllers. Previous works have shown The Core's ability to evolve agents capable of combat and navigation in the Xpilot video game. This research expands upon that premise by niching agents to specific subsets of the original environment The Core was tested in. Our results demonstrate the niched agents' capacity for success over agents niched to the entire system and agents niched to different sub-environments.
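To give a feel for evolution through local interactions, here is a minimal sketch, not The Core's actual implementation. It assumes bit-string genomes arranged on a ring, and every name in it (`toy_contest`, `local_step`, `run`) is hypothetical. Two neighbours meet, a head-to-head contest picks a winner, and the loser's slot is overwritten by a mutated crossover child, so no global fitness ranking is ever computed.

```python
import random

def crossover(a, b, rng):
    """One-point crossover; genomes must have equal length of at least 2."""
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]

def mutate(genome, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return [1 - g if rng.random() < rate else g for g in genome]

def toy_contest(a, b):
    """Hypothetical stand-in for an in-game encounter: more 1-bits wins."""
    return sum(a) >= sum(b)

def local_step(population, contest, rng, rate=0.05):
    """One local interaction between an agent and its right-hand neighbour."""
    i = rng.randrange(len(population))
    j = (i + 1) % len(population)
    if contest(population[i], population[j]):
        winner, loser = i, j
    else:
        winner, loser = j, i
    child = crossover(population[winner], population[loser], rng)
    # The child replaces the loser; the winner stays in place.
    population[loser] = mutate(child, rate, rng)

def run(pop_size=20, genome_len=16, steps=500, seed=0):
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(genome_len)]
                  for _ in range(pop_size)]
    for _ in range(steps):
        local_step(population, toy_contest, rng)
    return population
```

Calling `run()` returns the final population. In a real setting the contest would be decided by what happens between the two agents in the game, not by counting bits.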


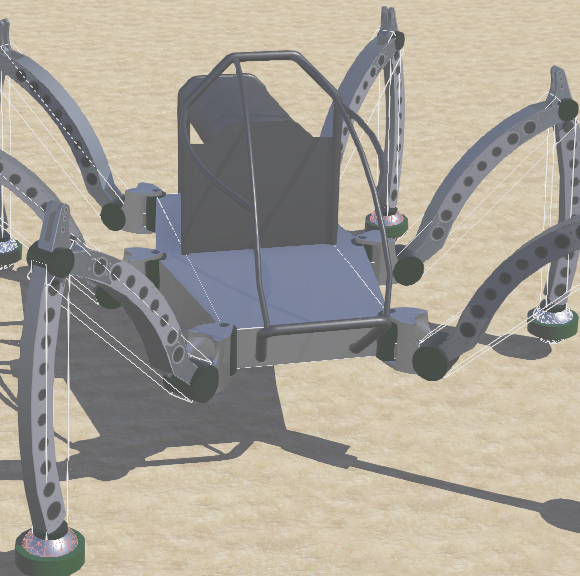

Decentralized Evolution of Hexapod Gaits with Independent Leg Controllers

Gary B. Parker, John Asaro, Jim O'Connor
17th International Conference on Evolutionary Computation Theory and Applications, 2025
Project Page / ResearchGate
Winner of the ECTA 2025 Best Poster Award!

Abstract: This paper presents a novel approach to hexapod locomotion by evolving each leg's gait independently through a decentralized evolutionary algorithm. Using the Webots simulator and the Mantis hexapod robot, we optimize individual leg controllers without centralized coordination, allowing emergent behaviors to drive the development of efficient, coordinated locomotion. Our decentralized method is benchmarked against cooperative coevolution, demonstrating improved efficacy in generating stable and adaptive gaits while showing interesting emergent coordination. By enabling independent evolution of leg controllers, this method reduces the complexity of gait optimization and highlights the potential of decentralized strategies for scalable and adaptive robotic systems.
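As a rough illustration of legs adapting independently, the toy sketch below gives each of six legs a single phase parameter and lets each leg run its own simple hill-climbing loop against a shared score. This is an assumption-laden stand-in, not the algorithm from the paper: the one-parameter controller, the `toy_walk_score` function (which simply rewards adjacent legs for being out of phase), and the accept-if-not-worse rule are all hypothetical, and no Webots simulation is involved.

```python
import random

NUM_LEGS = 6

def circular_distance(a, b):
    """Distance between two phases on a unit circle, in [0, 0.5]."""
    d = abs(a - b) % 1.0
    return min(d, 1.0 - d)

def toy_walk_score(phases):
    """Hypothetical team score: adjacent legs are rewarded for alternating."""
    return sum(circular_distance(phases[i], phases[(i + 1) % NUM_LEGS])
               for i in range(NUM_LEGS))

def evolve_leg(leg, phases, rng, sigma=0.1):
    """One leg mutates only its own phase and keeps it if the score holds."""
    candidate = list(phases)
    candidate[leg] = (phases[leg] + rng.gauss(0.0, sigma)) % 1.0
    if toy_walk_score(candidate) >= toy_walk_score(phases):
        phases[leg] = candidate[leg]

def run(generations=200, seed=0):
    rng = random.Random(seed)
    phases = [rng.random() for _ in range(NUM_LEGS)]
    history = [toy_walk_score(phases)]
    for _ in range(generations):
        # Each leg updates itself in turn; nothing selects joint combinations.
        for leg in range(NUM_LEGS):
            evolve_leg(leg, phases, rng)
        history.append(toy_walk_score(phases))
    return phases, history
```

`run()` returns the final phases and the per-generation score history. Because a leg only keeps a change that does not lower the shared score, the history never decreases in this toy setup.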

Unpublished Research


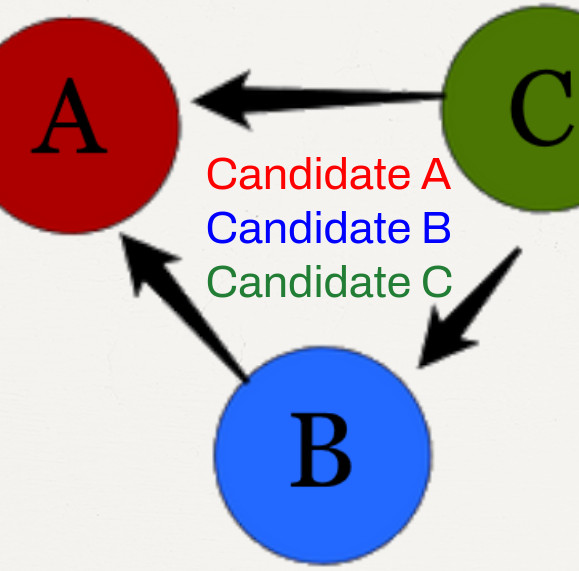

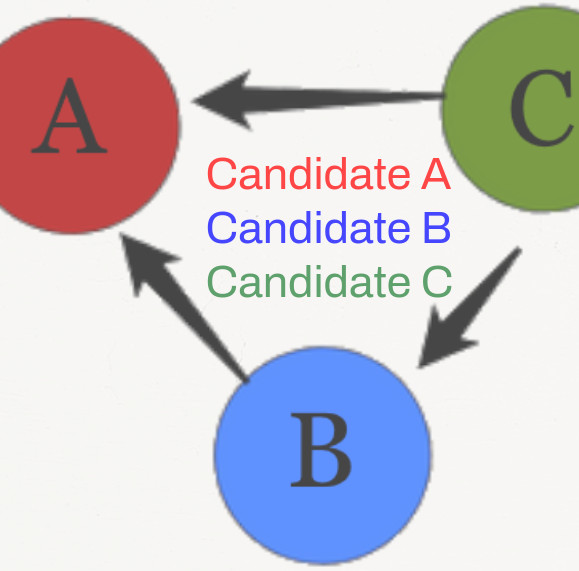

The Merits of a Voting Rule Based on θ-Winning Sets

John Asaro, Christine Chung
Summer Science Research Institute (SSRI) colloquium, 2024
Project Page / ResearchGate (Poster)

Description: Research done in collaboration with Dr. Christine Chung on developing a voting rule (essentially an algorithm that decides the outcome of an election) that aims to produce the socially optimal outcome for a group in both single-winner and multiwinner elections. Through empirical testing, we found that our voting rule produces the socially optimal outcome more often than all of the other well-known voting rules we tested against. This work was done as part of the 2024 Summer Science Research Institute (SSRI) program at Connecticut College and presented at the SSRI colloquium as the culmination of the research.
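For readers unfamiliar with θ-winning sets, the sketch below checks whether a set of candidates is θ-winning. It assumes the definition commonly used in the computational social choice literature: a set S is θ-winning if, for every candidate outside S, more than a θ fraction of voters prefer some member of S to that candidate. The function names are hypothetical, and this is only the membership check, not the voting rule developed in the project.

```python
def prefers_some_member(ranking, members, outsider):
    """True if this voter ranks at least one member above the outsider."""
    position = ranking.index(outsider)
    return any(candidate in members for candidate in ranking[:position])

def is_theta_winning(profile, members, theta):
    """Check the assumed theta-winning condition for `members`.

    `profile` is a list of complete rankings, most preferred candidate first.
    """
    members = set(members)
    candidates = set(profile[0])
    for outsider in candidates - members:
        support = sum(prefers_some_member(ranking, members, outsider)
                      for ranking in profile)
        # The definition is strict: support must exceed theta * voters.
        if support <= theta * len(profile):
            return False
    return True

# A three-voter preference cycle with no single majority winner.
profile = [
    ["a", "b", "c"],
    ["b", "c", "a"],
    ["c", "a", "b"],
]
print(is_theta_winning(profile, {"a"}, 0.5))       # False
print(is_theta_winning(profile, {"a", "b"}, 0.5))  # True
```

In this profile only one of the three voters ranks `a` above `c`, so `{"a"}` fails at θ = 0.5, while two of three voters rank `a` or `b` above `c`, so `{"a", "b"}` passes.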


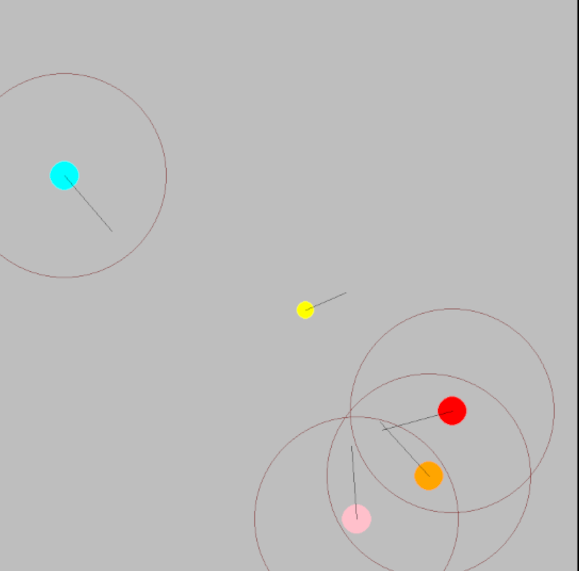

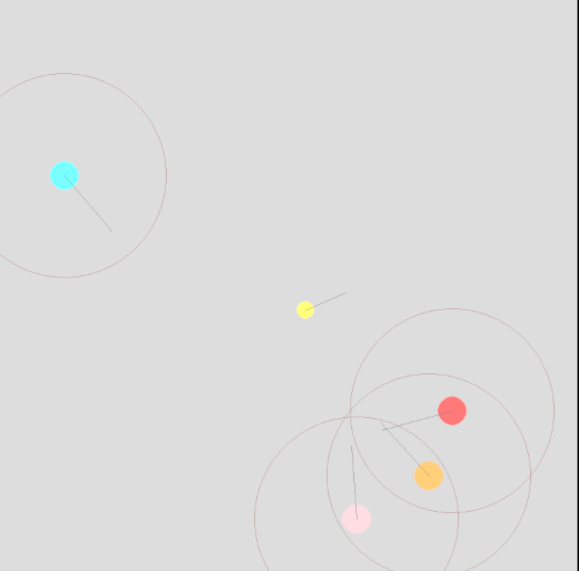

Predator Prey Decentralized Cooperative Coevolution

Gary B. Parker, John Asaro, Jim O'Connor
Project Page

Predator Prey Decentralized Cooperative Coevolution is an extension of our previous research in Decentralized Evolution of Hexapod Gaits with Independent Leg Controllers. We have successfully extended this work to a 2D predator-prey scenario, and we intend to use this environment for further research with the Decentralized Cooperative Coevolution method.

Projects


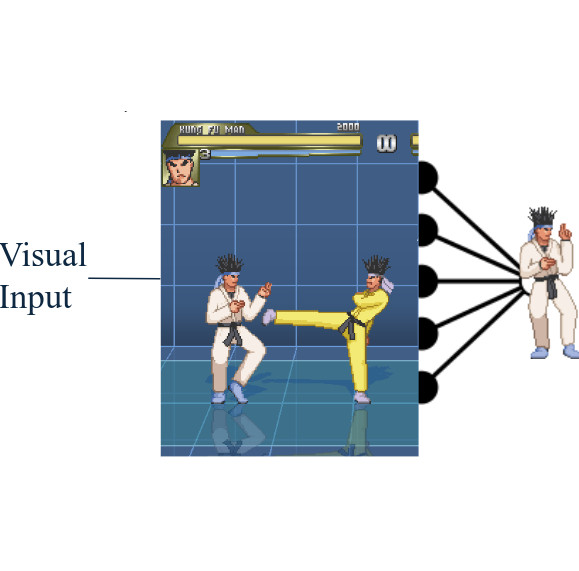

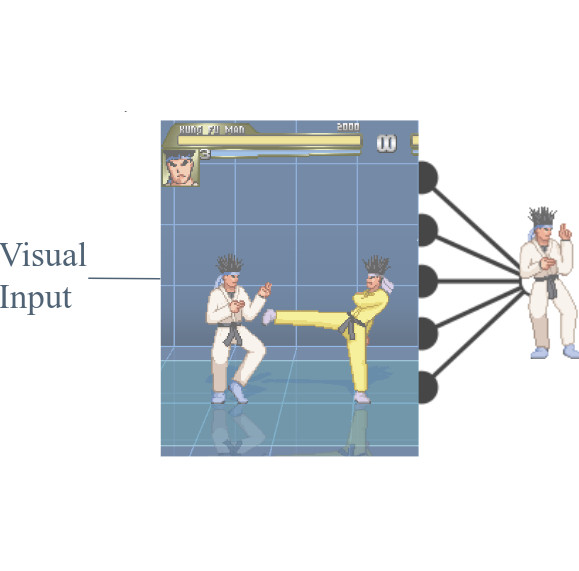

Viz Ikemen

John Asaro
Project Page

Description: Viz Ikemen is a game AI environment for training bots to play Ikemen GO using only visual information. Ikemen GO is an open-source fighting game written in the Go programming language. Viz Ikemen uses the game's "VS" mode as an environment in which a learning agent battles the CPU opponents built into Ikemen GO.

Note: This was originally developed as a tool for the Autonomous Agent Learning Lab, but it later became more of a personal passion project, since the environment's similarity to DareFightingICE limits its publishability. The Viz Ikemen environment is effectively finished and works as described. I am unlikely to expand it further, apart from packaging it and cleaning up the documentation so that anyone can use it easily, which has been on my to-do list since October 2025.


Inspired by Jon Barron's website.